Three months into running UCPChecker, the most common follow-up question we get from anyone reading a status report is the same: "OK, but how does that compare to [other store]?"

That question comes from everywhere. Developers picking which store to integrate with first. Analysts tracking which platforms are pulling ahead in UCP coverage. Store owners benchmarking against direct competitors. Marketing teams putting together pitch decks about why their stack is more agent-ready than the next. Platform vendors comparing their hosted ecosystem against rival platforms. AI agent builders deciding which retailers to feature in demo flows.

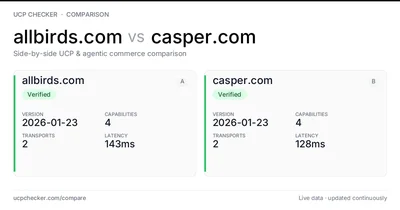

None of these audiences really care about a single store's UCP coverage in isolation. They all care about how it stacks up against another. Whether Allbirds is more agent-ready than Casper. Whether Boden's Shopify implementation goes deeper than Born for Fashion's. Whether the brand they're about to integrate with has more capabilities than the one they're already integrated with. The interesting answer is always relative.

Until today, the only way to get that answer on UCPChecker was to open two browser tabs and squint. So we built the thing people were already trying to do manually.

Compare any two UCP stores side-by-side at ucpchecker.com/compare →

What it does

Pick two domains. Get every measurable UCP attribute laid out side by side in a single scannable view.

- Headline metrics: status, UCP version, latency, capability count, transport count, payment handler count, HTTP status, robots.txt policy, platform. Quantitative cells highlight the leading side with a soft green left-border, so you can scan winners without reading numbers.

- Capability matrix: every UCP capability declared by either store, bucketed into "Both stores", "A only", and "B only". Each chip links straight to the capability's deep-dive page, so if you spot a gap you can immediately see what it is and why it matters.

- Transport diff: same Both / A only / B only treatment for REST, MCP, A2A, and Embedded.

- AI bot access matrix: GPTBot, Google-Extended, ClaudeBot, Applebot-Extended, and CCBot — allowed, blocked, or unknown for each store.

- Payment handlers: which payment methods each manifest declares, including the ones one side has and the other doesn't.

- Pick another: tiny inline form pre-filled with the current side A so you can swap side B and re-run instantly.

- Related comparisons: auto-suggested by capability overlap with side A — a useful map of who else is building similar agent surface in the same space.

- Status-aware FAQ: the questions change depending on the matchup. Two verified stores get a different lead question than one verified vs one not-detected.

It's free, public, indexable, and works with any domain. If a store isn't already in our directory, we run a live check the first time you compare it.

Why we built it

Two reasons. The first is the more honest one.

Right now, UCP coverage is a moving target. Some stores have everything declared — checkout, cart management, identity linking, payment tokens, multiple transports. Other stores have a single capability and a single transport, technically verified but barely useful to an agent. The directory grade tells you "verified" or "not". It doesn't tell you whether one verified store is significantly more agent-ready than another.

That difference matters more every week. The teams building agentic commerce tooling — the ones picking which stores to index first, which to feature in demo flows, which to recommend to their users — they need a relative view. They need to know that allbirds.com's manifest goes three levels deeper than the manifest of an otherwise equivalent store. They were already opening two status pages and comparing fields by hand. We watched it happen in user sessions. Compare just makes that workflow native.

The second reason is more strategic. We've been quietly building the infrastructure for what we think will be the most important question in agentic commerce as it matures: not whether a store is verified, but who's pulling ahead. Compare is the first user-facing surface that exposes that question directly. There will be more.

How we built it (the short version)

The data was already there. Every Merchant in our database has its capabilities, transports, and payment handlers loaded as proper many-to-many relationships. The computation is just three set operations per relation: intersect, left-only, right-only. The hard part was deciding what to compare and how to render the diff so two columns of dense data still feel scannable on a phone.
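A minimal sketch of those three set operations in Python. The function name and the capability names in the usage note are hypothetical, chosen only for illustration; the real data model is not shown in this post.

```python
def diff_relation(side_a, side_b):
    """Bucket two collections of names into both / A only / B only."""
    a, b = set(side_a), set(side_b)
    return {
        "both": sorted(a & b),    # intersect
        "a_only": sorted(a - b),  # left-only
        "b_only": sorted(b - a),  # right-only
    }
```

Calling `diff_relation(["checkout", "cart"], ["cart", "identity"])` puts `cart` in `both`, `checkout` in `a_only`, and `identity` in `b_only`. The same helper would apply unchanged to transports and payment handlers.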

Some of the design decisions worth calling out:

Alphabetical canonical URLs. /compare/casper.com/vs/allbirds.com 301-redirects to /compare/allbirds.com/vs/casper.com. Without that, every store-pair would generate two URLs and split its link equity in half. Pretty URL, single canonical, no duplicate content.
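The redirect rule can be sketched in a few lines of Python. The function names are hypothetical, and lowercasing the domains is an assumption added here, not something stated above.

```python
def canonical_compare_path(domain_a, domain_b):
    """Build the compare path with the two domains in alphabetical order."""
    first, second = sorted([domain_a.strip().lower(), domain_b.strip().lower()])
    return f"/compare/{first}/vs/{second}"


def redirect_target(domain_a, domain_b):
    """Return the path to 301-redirect to, or None if already canonical."""
    requested = f"/compare/{domain_a}/vs/{domain_b}"
    canonical = canonical_compare_path(domain_a, domain_b)
    return None if requested == canonical else canonical
```

With this sketch, `redirect_target("casper.com", "allbirds.com")` yields `/compare/allbirds.com/vs/casper.com`, while the already-sorted pair yields `None` and is served directly.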

Sync check on first visit. If you compare a domain that isn't in our database yet, we run a fresh UCP check inline before rendering. The compare page never shows "no data" — it always has something to compare. Same pattern as the per-store status pages.

Noindex when neither side is verified. A pairing of two non-verified stores produces a thin page that would just pollute the search index. Those pages still work for visitors who land on them; they just don't get indexed. As soon as either side becomes verified, the page flips to indexable automatically.
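Expressed as code, the rule is a one-line check. This is a sketch only: the status strings (`"verified"`, `"not_detected"`) and the choice to keep `follow` on non-indexed pages are assumptions made for the example.

```python
def robots_meta(status_a, status_b):
    """Return the robots meta content for a compare page."""
    # Indexable as soon as either side is verified.
    indexable = "verified" in (status_a, status_b)
    return "index, follow" if indexable else "noindex, follow"
```

Because the value is computed from the current statuses on every render, a page would flip to indexable without any manual step once one side's status changes.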

A "winner" highlight, not a "winner" badge. The leading side on a quantitative metric (lower latency, more capabilities, fresher check) gets a gentle green left-border on its cell — but we never write the word "better" or "worse" anywhere. The data speaks for itself, and "better" isn't a value judgment we want to be making about other people's stores.
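One way to decide which cell gets the border, sketched in Python. The metric keys and the tie and missing-value handling are assumptions for illustration; the only rule taken from the text is that lower latency and higher counts lead.

```python
# Metrics where the smaller value leads (hypothetical key name).
LOWER_LEADS = {"latency_ms"}


def leading_side(metric, value_a, value_b):
    """Return "a", "b", or None when there is no clear leader."""
    if value_a is None or value_b is None or value_a == value_b:
        return None  # missing data or a tie: highlight neither cell
    if metric in LOWER_LEADS:
        return "a" if value_a < value_b else "b"
    return "a" if value_a > value_b else "b"
```

Returning `None` for ties keeps the highlight from implying a lead that the numbers don't show.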

Status-aware FAQ that mirrors visible HTML to JSON-LD. Every compare page emits a real FAQPage schema with the same questions and answers a human reader sees. The FAQ branches based on the matchup so the lead question is always relevant to what you're looking at.
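For reference, the FAQPage shape defined by schema.org can be generated from the same question and answer pairs that drive the visible HTML. The sketch below assumes the FAQ is held as a list of `(question, answer)` tuples; the function name and the sample text are hypothetical.

```python
import json


def faq_jsonld(faqs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }


# Example: serialize for a <script type="application/ld+json"> tag.
payload = json.dumps(faq_jsonld([("Which store declares more capabilities?",
                                  "See the capability matrix above.")]))
```

Feeding the HTML template and this function from one list is what keeps the visible FAQ and the structured data from drifting apart.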

Try it

Four pairings we've been opening manually for weeks. Each one is a live embed of the actual comparison — the same data refreshes every 24 hours from our crawler.

allbirds.com vs casper.com — two well-known DTC brands, see how their capabilities differ.

boden.com vs kyliecosmetics.com — both verified Shopify stores, compare their depth.

hairlust.com vs thebodyshop.com — hair vs beauty, both verified.

bornforfashion.com vs casper.com — fashion vs sleep, contrasting capability surface.

Or just start typing two domains into ucpchecker.com/compare. Autocomplete suggests from the verified directory.

What's next

A few obvious extensions we're sitting on:

- Embeds need more testing in the wild. We've shipped iframe and Markdown embeds and validated them locally, but the real test is seeing them deployed across Substack, Medium, Notion, GitHub READMEs, and the hundred CMSes we don't have on our test bench. If you embed a comparison and the layout breaks, tell us — we'll fix it fast.

- postMessage iframe auto-resize. Right now embeds use a fixed iframe height (900px by default). Comparisons with sparse capability data leave whitespace; comparisons with dense data sometimes scroll. The cleanest fix is a postMessage handshake from the embed to the host page so the iframe sizes itself to its content. On the list.

- Three-way and N-way comparison. Two columns is the right default — past two, the visual gets cramped — but for "which of these five Shopify stores has the deepest UCP implementation" type questions, we'll likely add a tabular wide-mode behind a separate URL.

Compare is the first product surface we've shipped that frames UCP coverage as a relative thing rather than a binary verified/not. It changes what you can ask. We're already seeing internal queries we couldn't run before — "show me every verified store that has cart management but is missing identity linking" is one diff away from being a real question someone outside our team can answer.

If you build something with it, or if you find a comparison that surprised you, let us know. The interesting comparisons are the ones we haven't thought to run.